Copy paste your audio file(s) that you want to convert into the data folder. in the terminal, and create a new folder called data. With ffmpeg installed, you can now open your whisper.cpp folder in Finder using open. With HomeBrew installed you can install ffmpeg, a popular and open-source media converter that runs locally.īrew install ffmpeg (This can take a while) Post-processing isn’t too hard though if you’re familiar with the command line, and following this guide should help even novices post-process audio data in no time. This version of Whisper requires a very specific WAV file format with 16kHz audio samples to process, and it includes no convenient post-processing built-in like some versions of Whisper. Post-processing audio files for analysis by Whisper Now Whisper is ready! However, processing audio files is a bit finicky, and may require some post processing of the audio files to allow for analysis by Whisper. If all goes well you should see output like below. Which will also run a test audio file through the model. This will take a while depending on your network connection, and the large model requires around 3GB of storage. I prefer using the large model, but the base.en model should work quite well and presumably be much faster. If any of the below instructions differ from the README, please refer to that instead. With that done, you can now cd whisper.cpp. To install Whisper, open up your terminal and clone the repository: There is no native ARM version of Whisper as provided by OpenAI, but Georgi Gerganov helpfully provides a plain C/C++ port of the OpenAI version written in Python.įirst, make sure you have your build dependencies set up using xcode-select -install, as well as HomeBrew installed on your Mac.

Usually large neural networks require powerful GPUs such that for most people its limited to running on cloud software, but with the M1 MacBooks, and I suspect more powerful X86 CPUs, it will run with acceptable performance for personal use. Since its original release, OpenAI has open sourced the model and accompanying runtime allowing anyone to run Whisper either on cloud hardware, or locally.

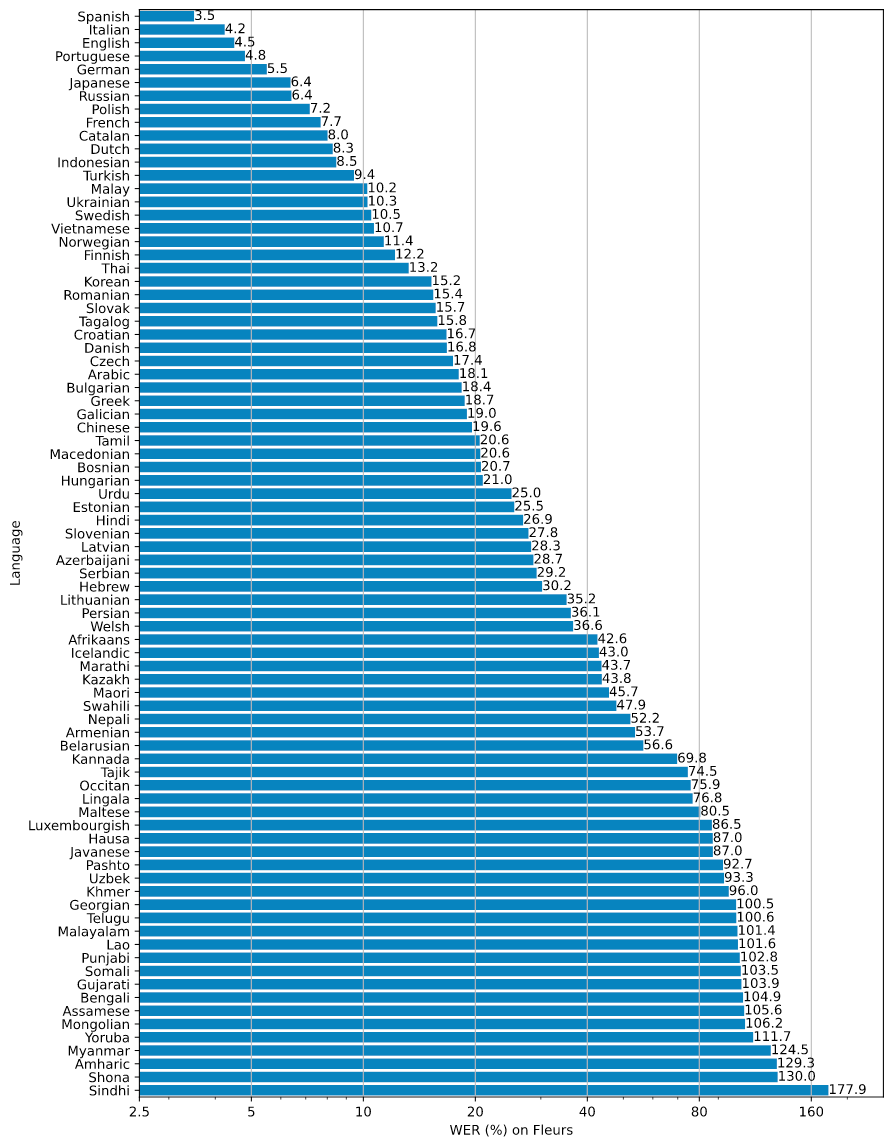

Whisper is a trained and open-source neural network for speech recognition that reaches impressive levels of accuracy for a lot of languages. However, to do so requires some finessing, and I hope this blog can help you along the way, and provide some tips for post-processing the data for greater accuracy. Running Whisper locally saves you from exposing potentially sensitive information to a third party, and on an M1 Mac the performance is pretty decent, with run times on an M1 Max being around 1/10th the actual playback time of the audio file. However, running Whisper in the cloud, or using cloud transcription services, can cause compliance issues such as with GDPR. If you ever find yourself having to transcribe hours upon hours of audio data, for example for your thesis or user research, Whisper might be of help.
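As a rough sketch of the conversion this guide is building towards, the loop below turns every file in a data folder into 16 kHz, mono, 16-bit PCM WAV using ffmpeg. It assumes you run it from inside the whisper.cpp folder and that ffmpeg is installed. The `wav_name` helper and the `.16k.wav` suffix are my own illustrative choices, not part of whisper.cpp or ffmpeg.

```shell
# Sketch: convert every file in data/ to 16 kHz, mono, 16-bit PCM WAV.
# Assumes the current directory is the whisper.cpp folder.

# Hypothetical helper (not part of whisper.cpp or ffmpeg): derive the
# output name, e.g. data/interview.mp3 -> data/interview.16k.wav
wav_name() {
  printf '%s.16k.wav\n' "${1%.*}"
}

for f in data/*; do
  [ -f "$f" ] || continue                    # skip if data/ is empty or missing
  case "$f" in *.16k.wav) continue ;; esac   # skip files already converted
  # -ar 16000: resample to 16 kHz
  # -ac 1: downmix to a single (mono) channel
  # -c:a pcm_s16le: encode as 16-bit little-endian PCM
  ffmpeg -i "$f" -ar 16000 -ac 1 -c:a pcm_s16le "$(wav_name "$f")"
done
```

The original files are left untouched, so you can rerun the loop after adding new recordings. The converted files can then be passed to whisper.cpp as described in its README.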
